Never look at the sun without eye protection!

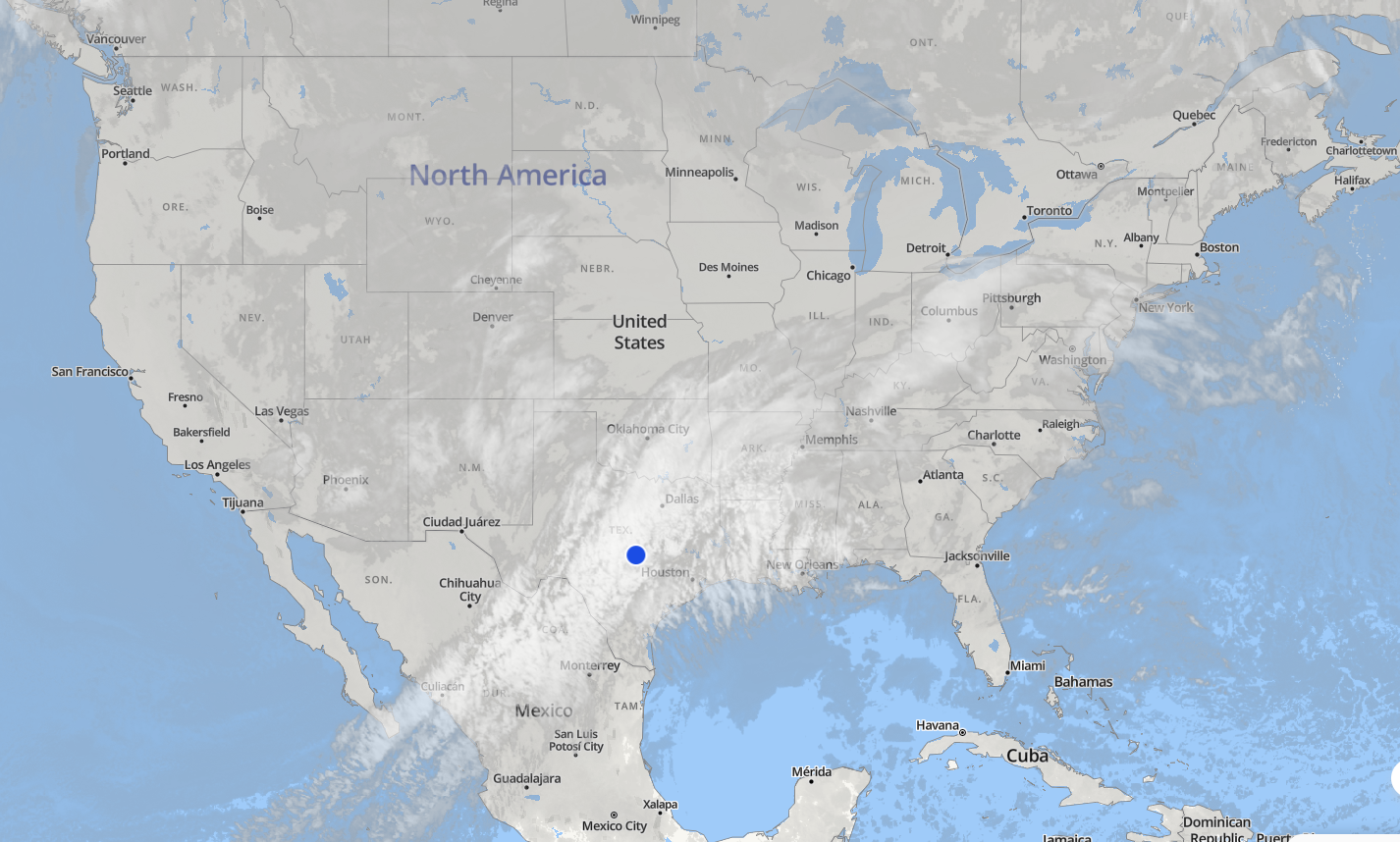

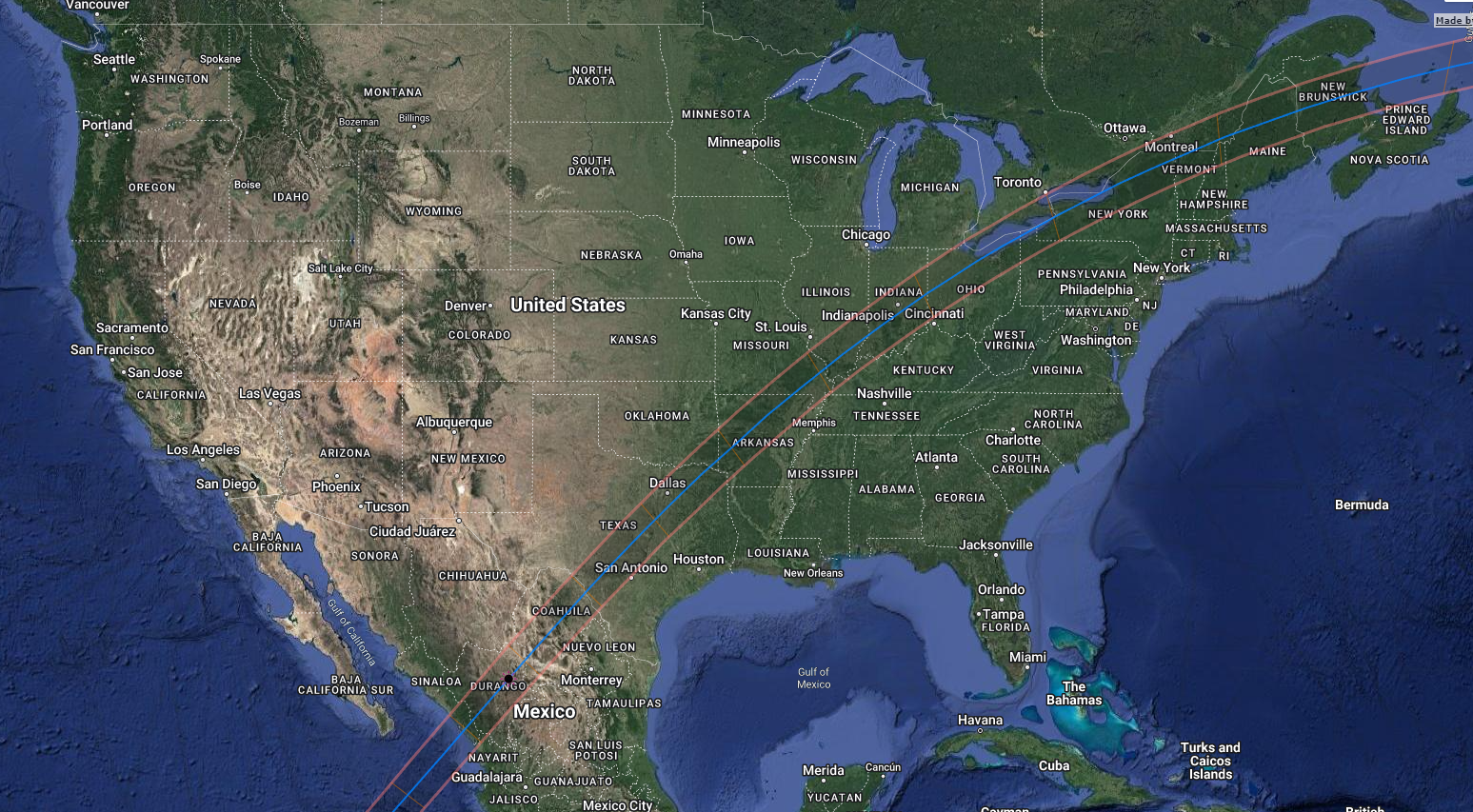

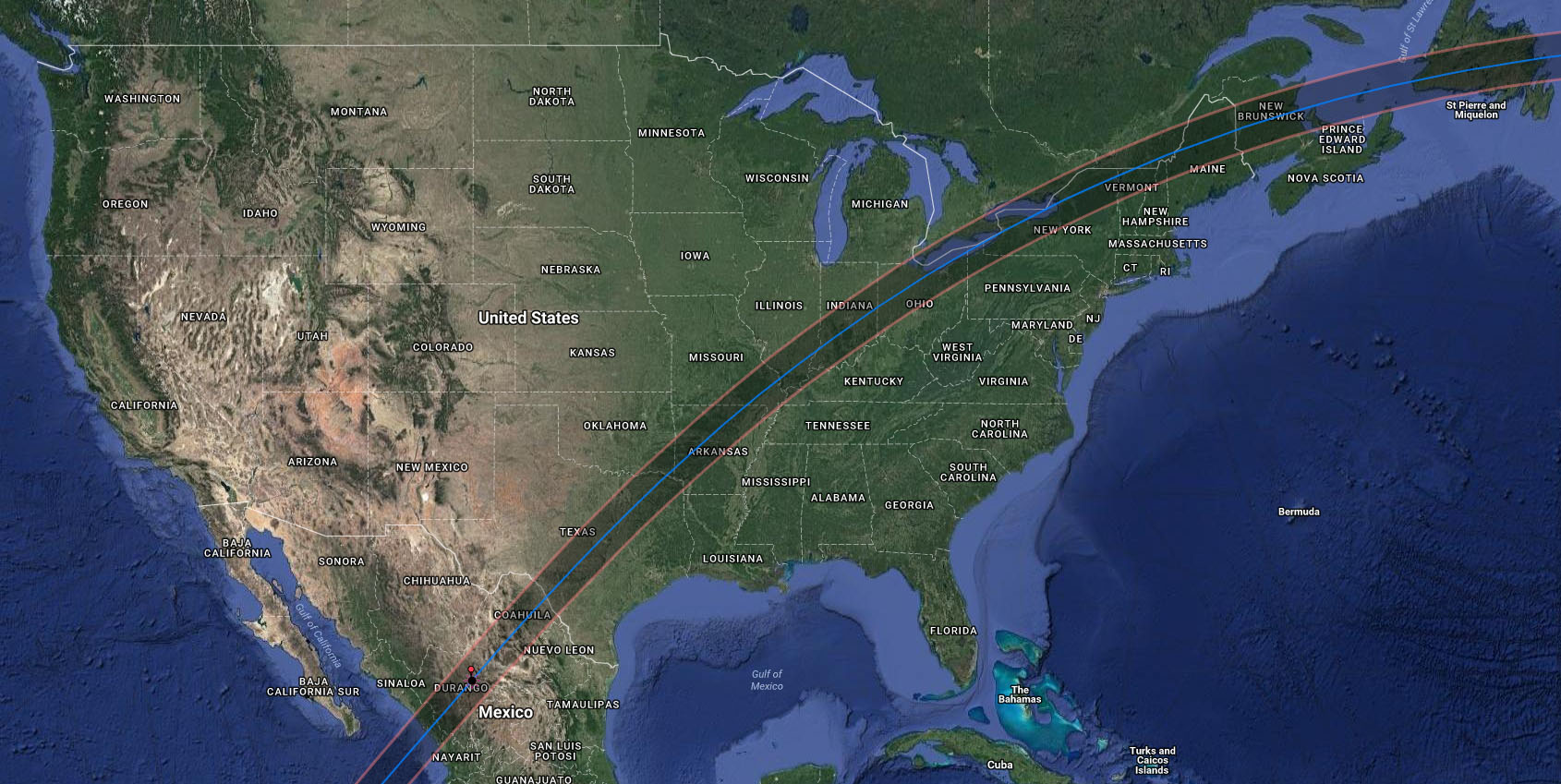

The next great total solar eclipse is now less than a year away, with a path running from Mexico up through Texas and much of the eastern United States before leaving through northern New York and Maine. Given the rarity of such an event, many people will be planning to travel somewhere along the path to see it. While the rest of the country will see a partial eclipse (from within the Moon's penumbra), only those along the marked path will see the Sun completely blocked by our Moon. Having had the opportunity to see the 2017 Total Solar Eclipse from Ravenna, Nebraska, I have to say that it is an indescribable experience that you have to see for yourself!

Here in Central Texas, we will get a beautiful view of the total eclipse across most of the Hill Country, with the centerline crossing through (or very near) Kerrville, Fredericksburg, Enchanted Rock State Park, Lake Buchanan, and Lampasas, then continuing on through Gatesville, Hillsboro, Ennis, Sulphur Springs, and Clarksville. Most of Austin, Dallas/Fort Worth, and Waco, along with about half of San Antonio, will also experience at least a short period of totality.

Path of 2024 solar eclipse through Texas.

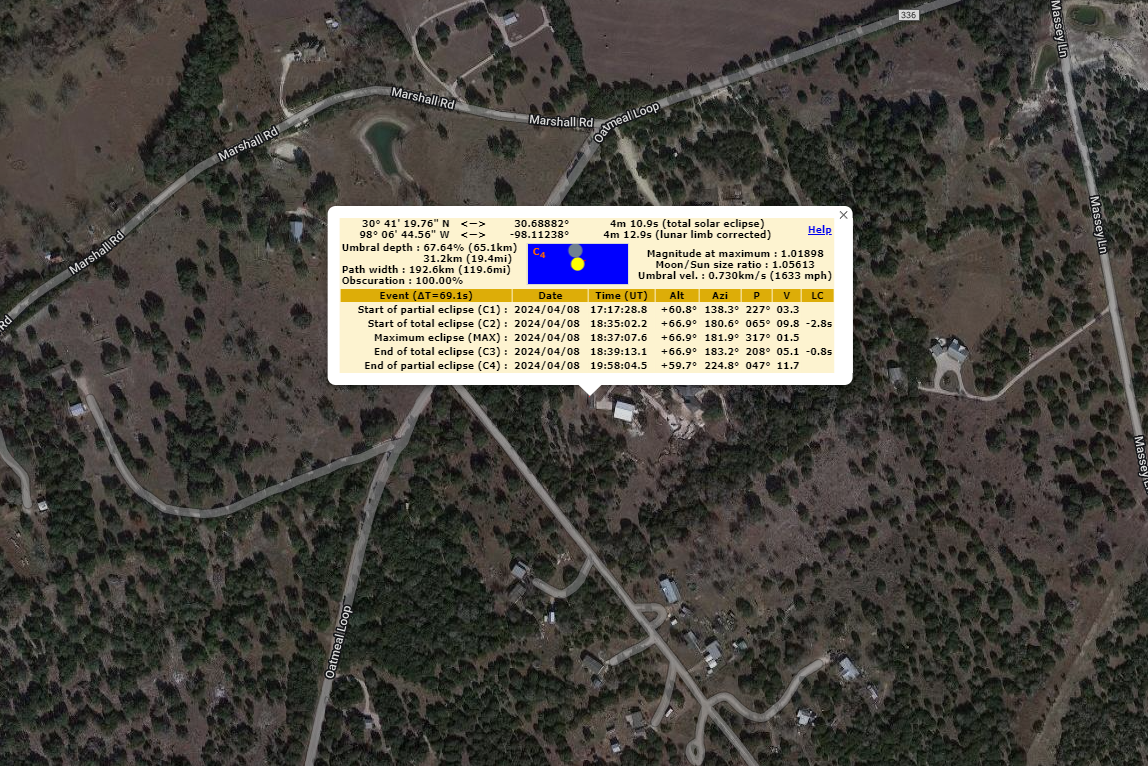

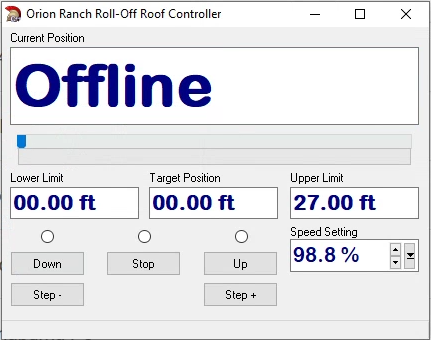

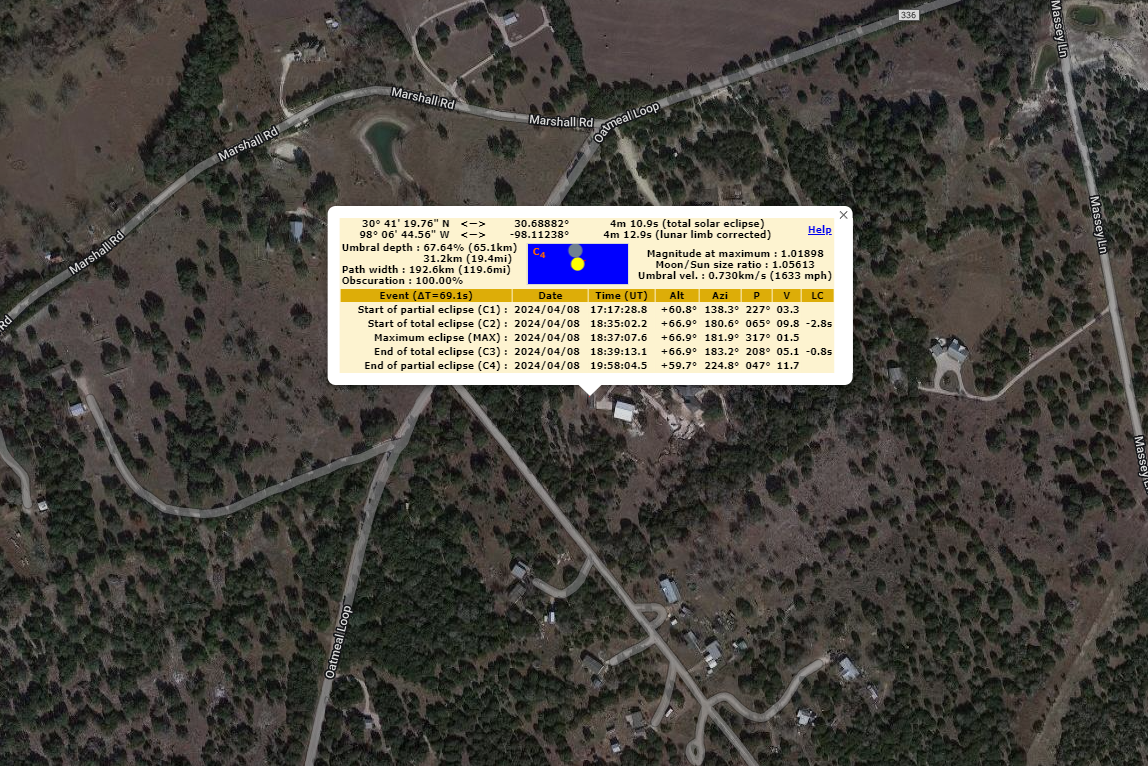

Orion Ranch Observatory is situated about 19.5 miles off the centerline, so we'll have just under 4 minutes 11 seconds of totality, compared to 4:24.7 on the centerline. Assuming the weather holds, an extra 14 seconds of totality really isn't worth the trouble of moving all my equipment to the centerline. Based on our weather this year, I'd say we have about a 50:50 chance of clear vs. cloudy skies, which matches the predictions for the area based on twenty years of data (see below). Still, Texas offers the best chance in the country, and only western Mexico would be better. We're hoping to host a big event here (fill out the contact form if you're interested in joining us), but I plan to scout backup options within driving distance, just in case. My uncle's farm is down by Fredericksburg, so I'm somewhat covered to the south, and Hillsboro would be easy to reach going north. Going even farther northeast gets us near Paris, TX, where my wife grew up!
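As a rough sanity check on those numbers, here's a small Python sketch of a common back-of-the-envelope approximation: treat the Moon's shadow as a circle sweeping straight across the ground, so totality at a given distance from the centerline lasts in proportion to the chord the shadow cuts at that point. The 4:24.7 centerline duration and the 19.5-mile offset come from above; the roughly 61-mile half-width of the path (`HALF_WIDTH_MI`) is my assumption for Central Texas, not an official figure. The real shadow is an ellipse moving over a curved Earth, so treat this as an estimate rather than a substitute for published local circumstances.

```python
import math

# Centerline duration quoted above: 4 minutes 24.7 seconds, in seconds.
CENTERLINE_S = 4 * 60 + 24.7

# ASSUMPTION (not from the post): approximate half-width of the
# path of totality in Central Texas, in miles.
HALF_WIDTH_MI = 61.0


def duration_at_offset(offset_mi, centerline_s=CENTERLINE_S, half_width_mi=HALF_WIDTH_MI):
    """Estimate totality (seconds) at a distance from the centerline.

    Circular-shadow chord approximation: duration scales with the chord
    length sqrt(1 - (d/R)^2). Returns 0 outside the path.
    """
    if abs(offset_mi) >= half_width_mi:
        return 0.0
    return centerline_s * math.sqrt(1.0 - (offset_mi / half_width_mi) ** 2)


def mmss(seconds):
    """Format seconds as M:SS.s, e.g. 250.8 -> '4:10.8'."""
    minutes, secs = divmod(seconds, 60)
    return f"{int(minutes)}:{secs:04.1f}"


if __name__ == "__main__":
    est = duration_at_offset(19.5)  # Orion Ranch offset from the text
    print(mmss(est), f"(about {CENTERLINE_S - est:.0f} s shorter than the centerline)")
```

With those assumptions it lands at about 4:10.8, a hair under 4:11 and roughly 14 seconds short of the centerline, which lines up with the figures above.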

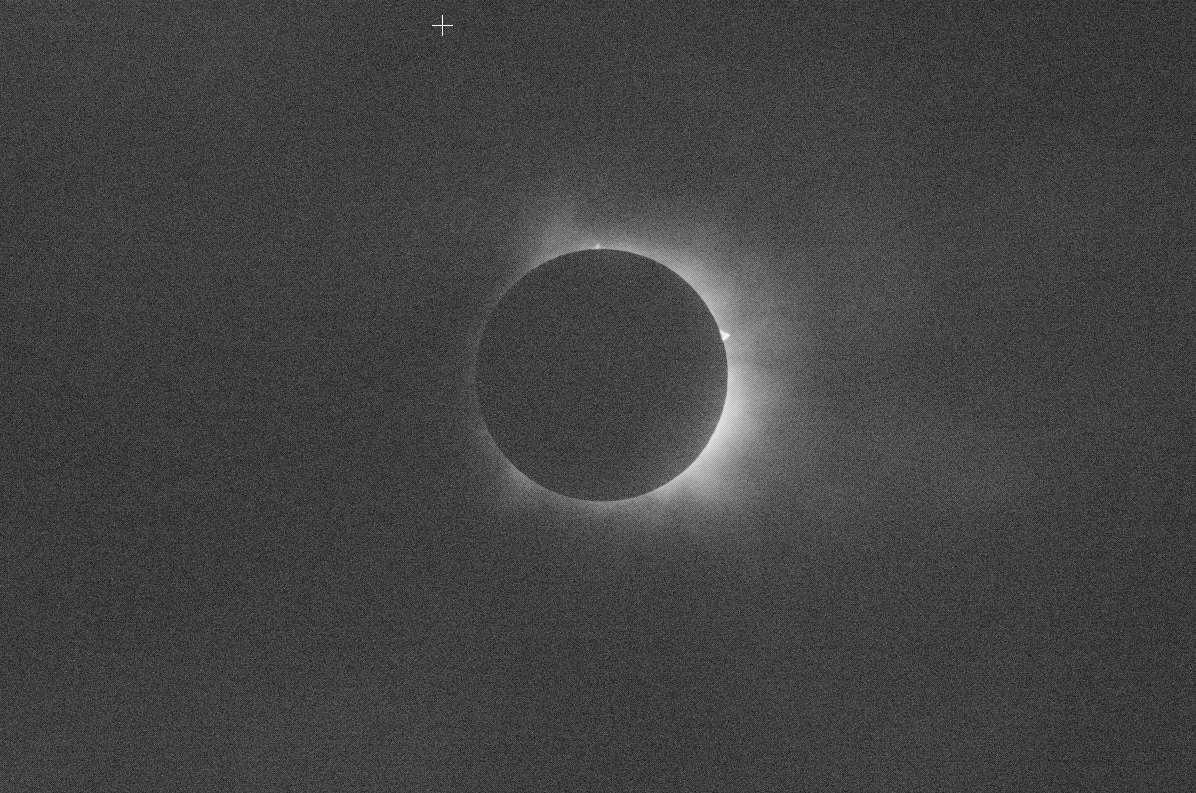

Orion Ranch Observatory Totality

There are plenty of other excellent opportunities for visitors to the Hill Country. The Eagle Eye Observatory (formerly the Austin Astronomical Society's dark sky site) at Canyon of the Eagles, on the north side of Lake Buchanan, is just past the centerline, which passes right through the Burnet County Park on the north side of the lake. There's another park on the south side that is also on the centerline.

Lake Buchanan and Surrounding Area, including Oatmeal (home of Orion Ranch)

About halfway between Burnet and Fredericksburg is Enchanted Rock State Park. I doubt they'd let anyone set up a telescope on the rock, but imagine the view from up there as you watch the shadow approach! There appear to be some neat places to stay in the area as well.

Enchanted Rock State Park and area.

Enchanted Rock

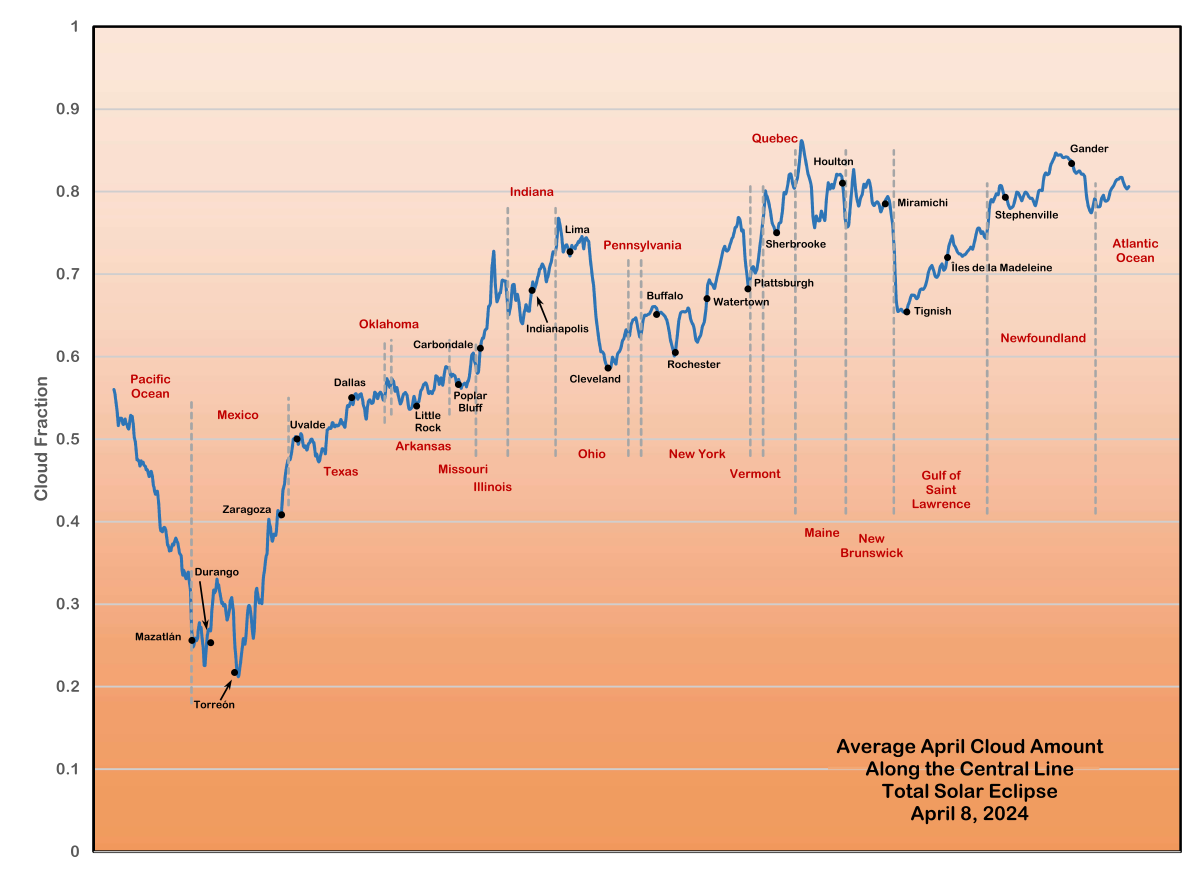

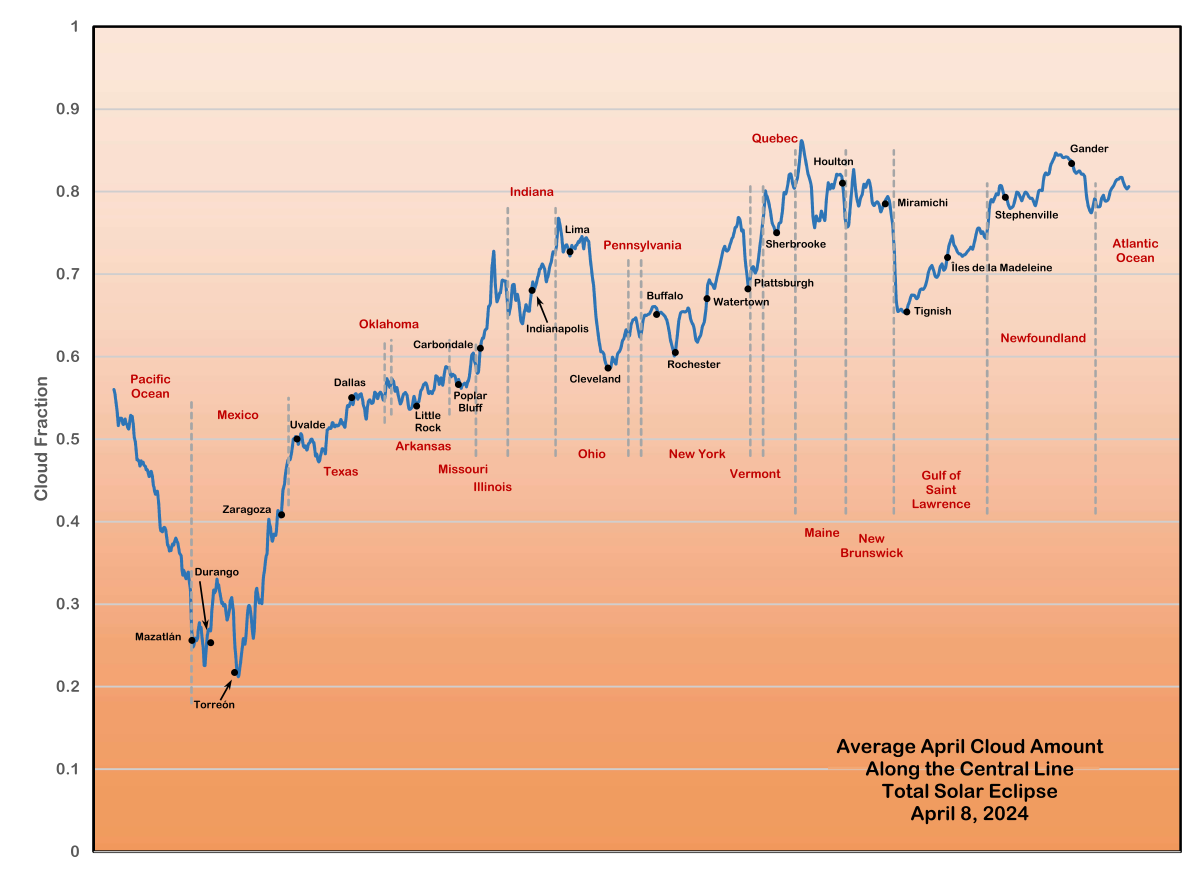

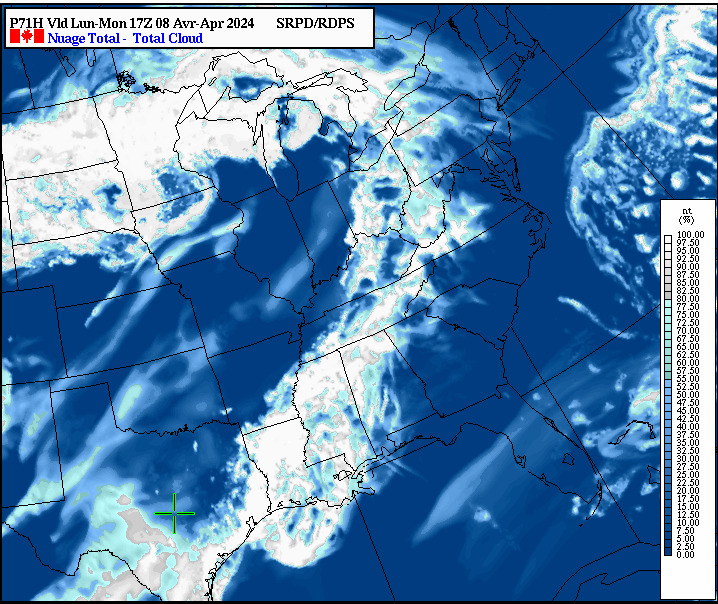

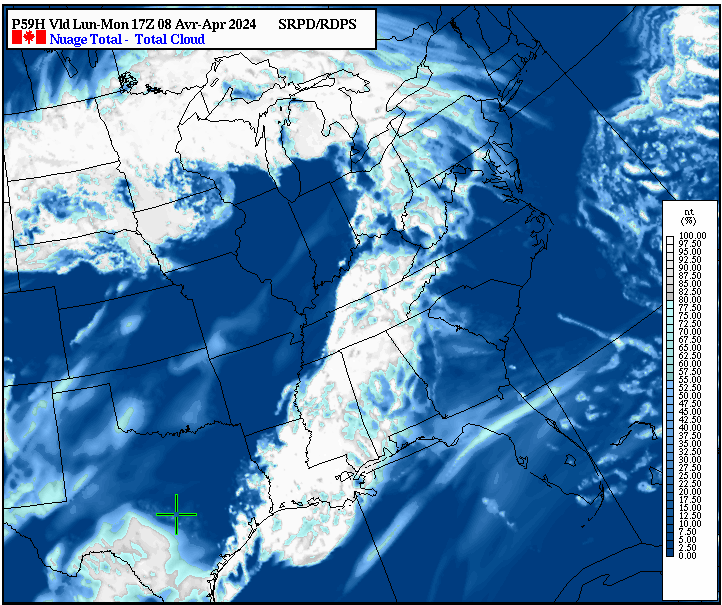

Now, as for the weather, Jay Anderson has an excellent website that covers the weather prospects along the entire path of the eclipse. Below are a couple of his images showing what we can expect. As my own experience suggested, most of Texas sits right around the 50% mark for that time of year, but that's still better than the rest of the country!

Cloud Fraction Map for April

Cloud Fraction Chart for April

It's easy to find information on the eclipse with a simple web search, but eclipse2024.org has put together a very good set of resources and links, including viewing locations and an article on why you must see the total eclipse and not just a partial one. The site also has plenty of good information on viewing and safety, links to buy viewing glasses, and details on this year's annular eclipse in October. That one will pass just south of Fredericksburg on a Saturday, so I'll probably try to get down there if I can!

So, happy observing! I hope you get a chance to see something like this, because it's something you'll never forget. Feel free to contact us if you're interested in attending our event at Orion Ranch Observatory. And always remember:

Never look at the sun without eye protection!